If you haven’t noticed, there is a new book out that lambasts value-added measures, those statistics used to estimate the impact that a teacher has on his or her students’ test scores. The book says value-added measurement (VAM) is a “weapon of math destruction.” My colleague Alex Resch has pointed out some of the book’s holes in logic and evidence. To be sure, warning about the dangers of misusing data analytics is a good idea. But I don’t think of VAM as a weapon of mass destruction, like a nuclear bomb or anthrax.

Rather, as Andy Rotherham pointed out a few years ago, it’s more like a chainsaw. (Note that we should also stop calling it “value added.”) On the one hand, value-added measures, like chainsaws, can be powerful tools and really useful for the right problem, like cutting down a tree. On the other hand, chainsaws, like VAMs, can do a lot of damage if you’re not careful and don’t know what you’re doing.

The anti-chainsaw crowd will show you gruesome photos of accidents with severed human limbs. Chainsaws can’t be trusted! They are a force of destruction!

But the chainsaw salesman, working on commission and eager to sell the tool, might not spend enough time with you on the safety procedures, or warn you if maybe you’re not ready for this, or provide you with alternatives to tree removal.

At Mathematica, we don’t want to be like the doubters, saying don’t use the tools at all, nor do we want to be the pushy salespeople who minimize the risks of misusing the tool. The Educator Impact Lab developed a “Guide to Practicing Safe VAM” to make sure our researchers and programmers are aware of the limitations and necessary precautions before we undertake this kind of work for real live teachers and principals. We are working on a public version for researchers and district leaders.

One aspect we wrestle with as researchers is how to provide results to educators. Most researchers will know that a model like this produces two things for every teacher and subject: a summary score (called a “point estimate”) and a measure of how confident we are in that score (a range derived from the statistic known as the “standard error”). It’s tempting to ignore the standard error and just use the score. After all, the standard error is confusing to stakeholders who aren’t familiar with confidence intervals or hypothesis tests and who don’t like their information provided as probabilistic statements. They want to know “what’s my score?”, not “what is the random interval that will contain my true score 95 percent of the time?” Our job as researchers who work with policymakers is to help find ways to transform that range of possible scores into something that decision makers can understand and use, rather than throw away like the weird-looking blade protector on your chainsaw.
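To make the point estimate and standard error concrete, here is a minimal Python sketch of one way a score and its uncertainty could be turned into a plain-language statement. It is an illustration only, not the method used in our guide: it assumes the estimate is approximately normally distributed, that scores are centered so the average teacher is at zero, and the function name, the labels, and the example numbers are all hypothetical.

```python
from statistics import NormalDist


def summarize_estimate(point_estimate, standard_error, reference=0.0, level=0.95):
    """Turn a point estimate and its standard error into an interval and a label.

    Assumes (for illustration) a normal sampling distribution and that
    `reference` is the score of an average teacher.
    """
    # Critical value for a two-sided interval, about 1.96 when level is 0.95.
    z = NormalDist().inv_cdf(0.5 + level / 2)
    lower = point_estimate - z * standard_error
    upper = point_estimate + z * standard_error

    # Only call a teacher above or below average when the whole
    # interval sits on one side of the reference point.
    if lower > reference:
        label = "above average"
    elif upper < reference:
        label = "below average"
    else:
        label = "not distinguishable from average"
    return lower, upper, label


# Hypothetical teacher: score of 0.30 with a standard error of 0.10.
lower, upper, label = summarize_estimate(0.30, 0.10)
print(f"Range: {lower:.2f} to {upper:.2f} ({label})")
```

The design choice here is to report a category only when the interval supports it, so a noisy score is not read as a precise one. Other summaries are possible, and this sketch does not settle which one decision makers would find most useful.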

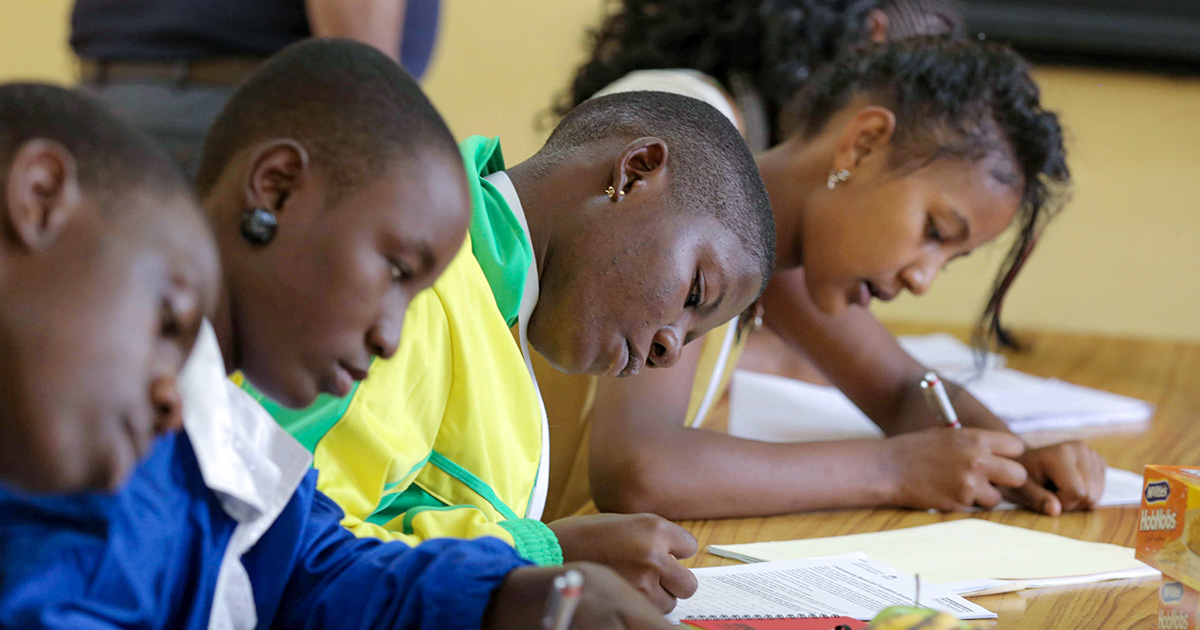

It’s important that we solve these problems of how to use VAMs carefully and not discard data, even when there is a large amount of uncertainty. Often there are teachers working quiet miracles in difficult situations: their students start the year way behind, and these teachers catch them up more than any other teacher would under similar circumstances. Being able to clear the underbrush so we can better search, with other tools, for teachers making a real difference is worth it. Value-added measures are a critical tool to help us find out who these teachers are, so their contributions don’t remain invisible.