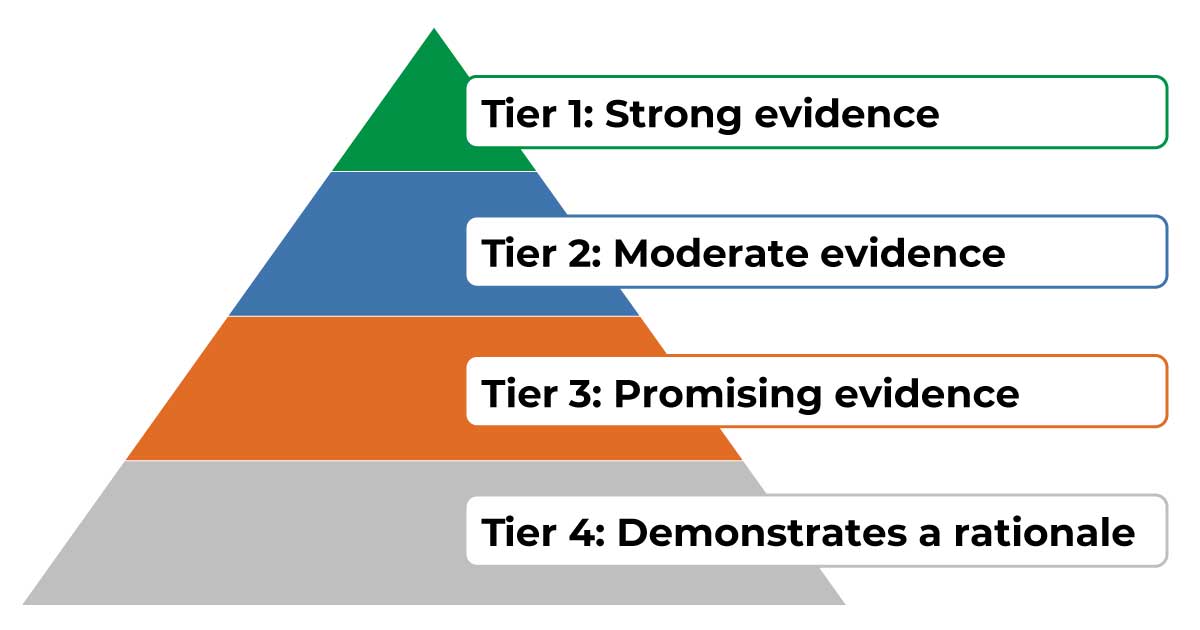

The Every Student Succeeds Act (ESSA) encourages schools and districts to use evidence-based interventions and practices, with tiers of evidence based on the strength and rigor of the research supporting the effectiveness of the intervention or practice in improving student outcomes. The American Rescue Plan (ARP) has boosted the importance of the evidence tiers by requiring districts to spend 20 percent of the associated funds on interventions that meet one of the tiers. An intervention backed by research might meet the requirements of one of the top three evidence tiers, depending on the rigor of the research.

But ESSA also recognizes that many promising interventions have not been rigorously studied, and that requiring rigorous evidence for all interventions would make innovation impossible. That’s why ESSA includes a fourth tier. Tier 4 interventions do not need to be supported by results from a previous study so long as they include a logic model based on high-quality research. This gives educators the flexibility to innovate, developing approaches they believe will be successful in their local context. Because many innovative interventions have not yet been carefully studied, Tier 4 is potentially very useful. But because Tier 4 interventions are not supported by strong prior research, they should include a plan to study their effectiveness. By including a plan to study the interventions they are implementing, schools and districts can not only meet the expectations of the American Rescue Plan but also contribute valuable knowledge to the evidence base of what works in education.

Tier 4 interventions must be supported by a well-defined logic model. Refer to this REL Pacific tool and slides 23-30 of this ESSA tiers training from the Institute of Education Sciences to learn about logic models and how to create them.

Collecting evidence on whether an intervention is succeeding can be a challenge. One of the biggest stumbling blocks is developing a study design that can give you confidence that any change in outcomes results from the intervention itself (rather than from other factors). If reading proficiency improved after you implemented a supplemental reading program, how do you know the improvement wasn’t caused by something else?

Using a comparison group is the best way to demonstrate that any change in student outcomes results from the intervention itself. The biggest challenge is finding an appropriate comparison group, so that it is possible to compare results for those who participate in the intervention with those who do not. This can pose operational challenges because educators and policymakers typically want to give promising interventions to everyone. But there really isn’t any good way to know whether an intervention has worked without creating some sort of comparison group that does not get the intervention.

Here are three ways you can create a comparison group:

- Randomly assign students or teachers to treatment and comparison groups before you begin to implement the intervention.

A randomized experiment, the gold standard in research design, uses a lottery to determine who is in the treatment group and who is in the comparison group. The treatment group receives the intervention first, and other students may receive it later. (This is helpful if you would like to use a small-scale test to see whether your intervention is effective before rolling it out on a larger scale.) After the treatment group receives the intervention, you can compare student outcomes across the treatment and comparison groups to analyze whether the intervention is working. The free Evidence to Insights Coach, or e2i Coach, is a digital tool that helps educators generate rigorous evidence and can help you with the randomization process. Using a randomized experimental design can also make your work eligible for addition to the What Works Clearinghouse in one of the higher tiers of evidence!
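For readers who handle their own data, the lottery described above can be sketched in a few lines of Python. This is a minimal illustration, not the method the e2i Coach uses: the function names, the roster of made-up student IDs, and the fixed seed are all assumptions chosen for the example.

```python
import random
from statistics import mean

def assign_by_lottery(student_ids, seed=2024):
    """Shuffle the roster and split it into treatment and comparison halves."""
    # A fixed seed (hypothetical value) lets others reproduce and audit the lottery.
    rng = random.Random(seed)
    shuffled = list(student_ids)
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return shuffled[:half], shuffled[half:]

def difference_in_means(outcomes, treatment, comparison):
    """Average outcome in the treatment group minus the comparison group."""
    return mean(outcomes[s] for s in treatment) - mean(outcomes[s] for s in comparison)

# Hypothetical roster of 40 students; real IDs would come from your own records.
roster = [f"S{n:03d}" for n in range(1, 41)]
treatment, comparison = assign_by_lottery(roster)
```

Once outcome data are available, `difference_in_means` gives a first look at the gap between the two groups. A full analysis would also ask whether a gap of that size could have arisen by chance.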

- Use a matched comparison approach.

If you can’t randomize, or if your intervention is already underway, you can identify a “matched comparison” group. This involves looking at administrative data to select a group of students who are not receiving the intervention but are similar to the students who are. The comparison group students should be in the same school or district as students receiving the intervention and should be similar to them in terms of demographics and achievement levels before the start of the intervention. As with randomization, comparing outcomes in the intervention group to those in the “matched comparison” group helps ensure that any changes resulted from the intervention rather than from other factors. The e2i Coach can help with analyzing data for matched comparison groups as well as for randomized studies.

To identify your matched comparison group, we suggest partnering with district or state education agency personnel with data or statistics experience. The e2i Coach can also identify matched comparison groups.
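To make the matching idea concrete, here is a simplified sketch in Python. It pairs each participating student with the non-participant in the same school whose prior test score is closest. The field names (`id`, `school`, `prior_score`) and the sample records are hypothetical, and the sketch matches on only two characteristics; a real study would usually also match on demographics and might use a more formal statistical method.

```python
def match_comparison_group(participants, candidates):
    """Pair each participant with the closest unused same-school non-participant."""
    available = list(candidates)
    matches = {}
    for p in participants:
        pool = [c for c in available if c["school"] == p["school"]]
        if not pool:
            continue  # leave unmatched rather than force a poor match
        best = min(pool, key=lambda c: abs(c["prior_score"] - p["prior_score"]))
        matches[p["id"]] = best["id"]
        available.remove(best)  # each comparison student is used at most once
    return matches

# Made-up records for illustration only.
participants = [
    {"id": "P1", "school": "A", "prior_score": 410},
    {"id": "P2", "school": "B", "prior_score": 455},
]
candidates = [
    {"id": "C1", "school": "A", "prior_score": 430},
    {"id": "C2", "school": "A", "prior_score": 405},
    {"id": "C3", "school": "B", "prior_score": 450},
    {"id": "C4", "school": "B", "prior_score": 500},
]
matches = match_comparison_group(participants, candidates)
```

With these sample records, P1 is paired with C2 and P2 with C3, because those students attend the same schools and have the closest prior scores.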

- Compare outcomes for students just above and below a threshold that triggers an intervention.

Let’s say your school district refers students to an attendance intervention if they are absent for 10 or more days in a single semester. You can compare outcomes for students who just barely made it into the intervention group (10 or 11 absences) to similar students in the comparison group (8 or 9 absences). Because students on either side of the cutoff are likely to be similar in other respects, a difference in their outcomes can more plausibly be attributed to the intervention. This method is called a regression discontinuity design.
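The attendance example can be sketched in Python as a simple comparison of students just above and just below the cutoff. This is a deliberately stripped-down version: a full regression discontinuity analysis fits trend lines on each side of the threshold rather than comparing raw averages. The field names, the two-day window, and the sample records are assumptions made for illustration.

```python
from statistics import mean

THRESHOLD = 10  # absences that trigger the intervention, as in the example above
BANDWIDTH = 2   # assumed window: 8-9 absences versus 10-11 absences

def near_threshold_gap(students, threshold=THRESHOLD, bandwidth=BANDWIDTH):
    """Mean outcome just above the cutoff minus mean outcome just below it."""
    above = [s["outcome"] for s in students
             if threshold <= s["absences"] < threshold + bandwidth]
    below = [s["outcome"] for s in students
             if threshold - bandwidth <= s["absences"] < threshold]
    if not above or not below:
        raise ValueError("need students on both sides of the threshold")
    return mean(above) - mean(below)

# Made-up records; "outcome" might be next-semester attendance rate (percent).
students = [
    {"absences": 8, "outcome": 90},
    {"absences": 9, "outcome": 88},
    {"absences": 10, "outcome": 93},
    {"absences": 11, "outcome": 91},
    {"absences": 15, "outcome": 70},  # outside the window, so ignored
]
gap = near_threshold_gap(students)
```

Students far from the cutoff, like the one with 15 absences, are left out because they are less comparable to students near the threshold.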

Although collecting rigorous evidence may be challenging, it pays off over time. It will help you determine whether your interventions are working and decide how to invest in the most effective approaches for your students. Along the way, you may produce research that falls under ESSA’s Tiers 1, 2, or 3, spreading evidence on what works in education across the country.

Cross-posted from the REL Mid-Atlantic website.