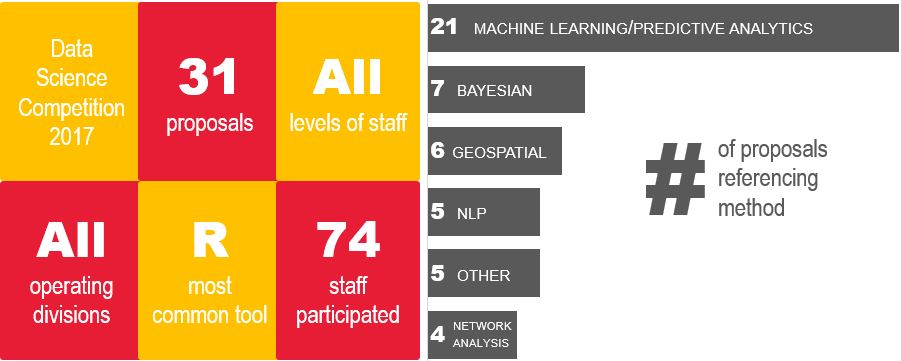

My Mathematica colleagues and I are committed to finding innovative ways to answer critical policy questions. We’re also an incredibly competitive group (you should see the heated Ultimate Frisbee games on Friday mornings outside our DC office!). So we recently sought to channel those forces as part of our efforts to integrate new frontiers in data science with our traditional policy research and program improvement work.

To set the context for this competition, let’s clarify what we mean by data science. The development of this field is being driven by several catalysts, including:

- Big Data. The days of working with a snapshot of administrative data or relying on costly data collection are coming to an end. Big Data can provide program administrators with the volume, velocity, and variety of information required to make timely and accurate data-driven decisions.

- Finite resources. Human capital is expensive and must be optimized to take advantage of every dollar spent to improve public well-being. Advanced analytic methods such as predictive modeling and machine learning can focus these resources where the return on investment is the greatest.

- Large-scale data processing. The introduction of ubiquitous cloud resources and advances in parallel processing have opened the door to processing of huge volumes of data using computer-intensive methods that were not possible just a few years ago. Such advances in computing power can transform how policymakers and researchers approach program policies and improvements.

- New methodologies. New methods can harness Big Data and use the available computing power to enable better analysis and prediction of outcomes. Advanced methods like Bayesian analysis, machine learning, and geospatial visualization can provide more accurate and insightful answers to traditional problems.

Traditional and Cutting-Edge Perspectives

- Identify creative uses of available data and methods to solve program and policy problems

- Harness cross-functional project teams that include subject matter experts and data scientists

- Demonstrate proof-of-concept results that show our clients and peers effective ways to integrate data science into program operations and evaluation

- What if we examined the network structure of a power grid to improve reliability? One proposal looked at how to exploit the hierarchical network structure of the power grid of an African nation to determine the optimal placement of a finite number of real-time data monitors to sample key grid performance outcomes. This proposal brought together network analysis, graph theory, optimization, and geospatial visualizations.

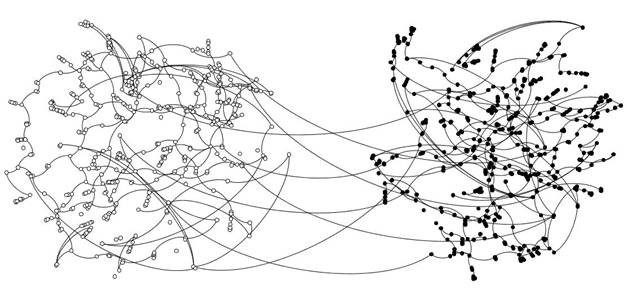

- Can linked data reduce urban crime and violence? One proposal explored the problem of urban crime and violence by creatively linking city-managed data sources. It would use network and cluster analysis to understand the collaboration network formed by client sharing among 24 violence prevention programs, as well as the impact of various combinations of services on outcomes. The objective is to understand whether clients access services from multiple programs, how much the dosage of services varies, and whether services target those at highest risk of violence.

- How does a neighborhood determine if it has sufficient social service capacity? A similar proposal looked to evaluate whether urban neighborhoods have sufficient capacity to address community needs related to crime and lead poisoning of children, and to assess whether a neighborhood has sufficient resource capacity to implement and sustain interventions. This is a critical step in determining whether programs aimed at improving neighborhood vitality will be successful. This project leverages machine learning, geospatial analysis, and geospatial visualization methods.

- Can we predict greenhouse gas emissions? A climate change proposal would develop a predictive model that applies a Bayesian approach to estimate greenhouse gas emissions for all cities with more than 300,000 residents in the United States and Brazil, using geospatial analysis that draws on national and city data.

- Could we reduce the number of opioid deaths by predicting overdoses? A proposal addressed the opioid epidemic using advanced analytics and Bayesian methods to synthesize public health and law enforcement data to parse out illicit versus legitimate use, identify characteristics of users, and use this information to predict fatal overdoses. Helping public health and law enforcement officials predict rather than react to changes in opioid abuse will enable better targeting of resources.

- Can we save time and money by automating more processes? We had four proposals for adapting natural language processing to automatically categorize the contents of dense administrative text, freeing up staff from manually performing those tasks. For example, occupational information about past jobs can predict the probability of returning to the labor force and engaging in substantial gainful activity. However, in both administrative and survey data, occupational information is often collected in an open-ended text field. Reading this text and assigning labor occupation categories has been done manually by trained coders, a process that is labor intensive, time consuming, and sometimes cost prohibitive. A supervised machine learning approach can code labor occupation categories instead, making it possible to quickly code millions of administrative records and to improve the quality and consistency of coding relative to manual coding.
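To make the occupation-coding idea concrete, here is a minimal sketch of supervised text classification in Python. It is not the method any of the proposals used; it assumes a simple multinomial naive Bayes model with add-one smoothing, and the class name `NaiveBayesOccupationCoder`, the three occupation categories, and the training examples are all hypothetical placeholders for a much larger set of records that trained coders have already labeled.

```python
import math
import re
from collections import Counter, defaultdict


def tokenize(text):
    """Lowercase the text and split it into alphabetic word tokens."""
    return re.findall(r"[a-z]+", text.lower())


class NaiveBayesOccupationCoder:
    """Hypothetical coder: multinomial naive Bayes with add-one smoothing."""

    def fit(self, texts, labels):
        # Count how many training records carry each label (class priors).
        self.label_counts = Counter(labels)
        # Count how often each word appears under each label.
        self.word_counts = defaultdict(Counter)
        for text, label in zip(texts, labels):
            self.word_counts[label].update(tokenize(text))
        self.vocabulary = {
            word for counts in self.word_counts.values() for word in counts
        }
        return self

    def predict(self, text):
        # Words never seen in training carry no information, so skip them.
        tokens = [t for t in tokenize(text) if t in self.vocabulary]
        total_docs = sum(self.label_counts.values())
        best_label, best_score = None, -math.inf
        for label, doc_count in self.label_counts.items():
            counts = self.word_counts[label]
            denom = sum(counts.values()) + len(self.vocabulary)
            # Log prior plus the sum of smoothed log likelihoods.
            score = math.log(doc_count / total_docs)
            for token in tokens:
                score += math.log((counts[token] + 1) / denom)
            if score > best_score:
                best_label, best_score = label, score
        return best_label


# Hypothetical hand-coded examples (not real administrative data).
training_texts = [
    "registered nurse at a county hospital",
    "home health aide caring for elderly patients",
    "long haul truck driver",
    "delivery driver for a parcel company",
    "elementary school teacher",
    "substitute teacher and tutor for high school math",
]
training_labels = [
    "healthcare", "healthcare",
    "transportation", "transportation",
    "education", "education",
]

coder = NaiveBayesOccupationCoder().fit(training_texts, training_labels)
print(coder.predict("night shift nurse at a hospital"))
print(coder.predict("drove a delivery truck"))
```

In practice, a model like this would be trained on many thousands of manually coded records and checked against a held-out sample of human-coded text before being trusted to code new records on its own.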

Data science is on the cusp of widespread adoption as a critical component of policy research and program support. Ideas such as the ones generated in our competition will help the research community stay at the forefront of this wave and bring innovative solutions to complex problems facing decision makers across the globe.